How Automated Reasoning checks in Amazon Bedrock transform generative AI compliance

16 avril 2026

Compliance teams in regulated industries spend weeks on manual reviews, pay for outside consultants, and still face audit gaps when AI outputs lack formal proof. Automated Reasoning checks in Amazon Bedrock Guardrails address this by replacing probabilistic AI validation with mathematical verification, turning AI-generated decisions into provably correct, auditable results.

In this post, you’ll learn why probabilistic AI validation falls short in regulated industries and how Automated Reasoning checks use formal verification to deliver mathematically proven results. You’ll also see how customers across six industries use this technology to produce formally verified, auditable AI outputs, and how to get started.

The compliance challenge

Regulated industries face high-stakes compliance challenges. Hospitals navigate radiation safety regulations. Financial institutions classify AI risk under the EU AI Act. Insurance carriers answer coverage questions where incorrect responses carry regulatory consequences. Manual review, costly consultants, and legacy processes don’t scale.

Many teams building generative AI or agentic solutions reach for a large language model (LLM)-as-a-judge pattern: using a second LLM to evaluate the first model’s outputs. While intuitive, this approach carries a fundamental limitation: one probabilistic system validating another cannot provide the formal, auditable guarantee that regulated industries require.

How Automated Reasoning checks deliver provable compliance against a defined set of rules and constraints

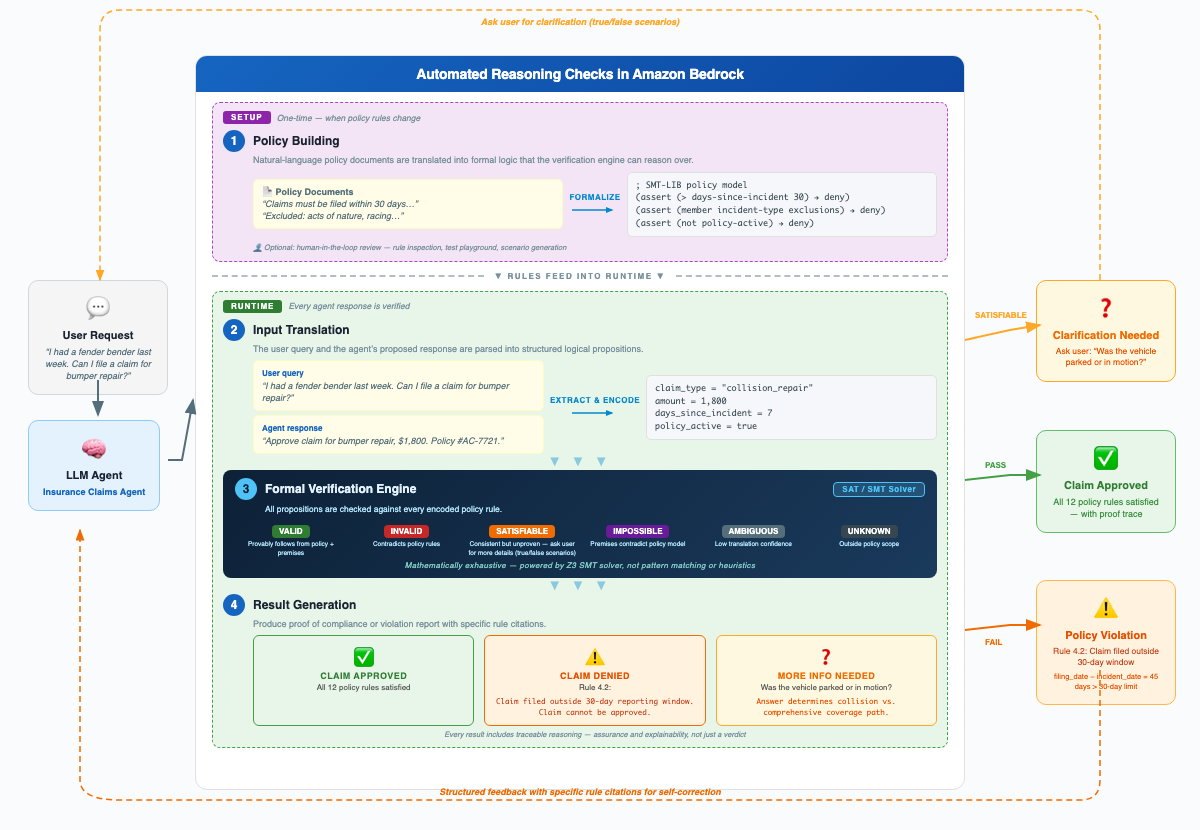

Automated Reasoning checks in Amazon Bedrock Guardrails apply formal verification methods, grounded in mathematical logic, to validate AI-generated outputs against a defined set of rules and constraints. You get a provably correct, auditable assessment for every request.

Consider the following example. An AI assistant tells a customer their insurance claim is covered. With an LLM-as-a-judge approach, a second model reviews that answer and says “looks right.” With Automated Reasoning checks, the system mathematically proves the answer is consistent with every rule in the policy. If rules are violated, it identifies exactly which ones and why.

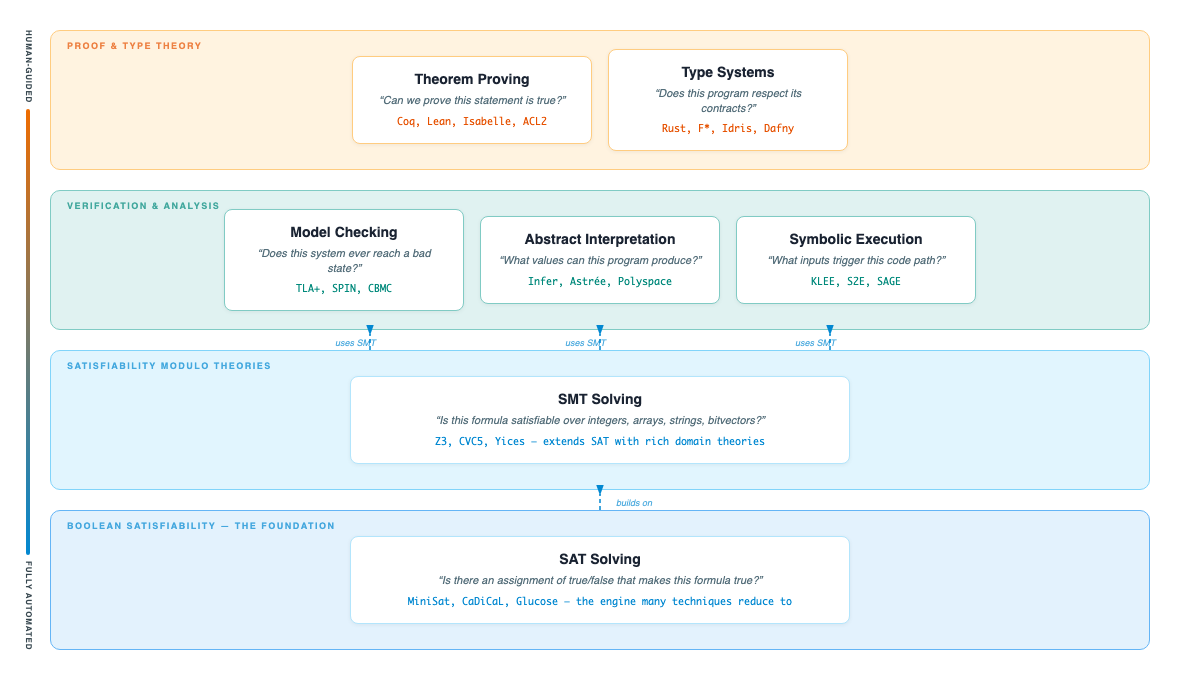

Figure 1: Automated Reasoning taxonomy, including Theorem Proving, Type Systems, Model Checking, Abstract Interpretation, Symbolic Execution, SMT Solving, and SAT Solving. SAT and SMT solving form the foundation of Automated Reasoning checks

Automated reasoning develops algorithms that automatically derive logical conclusions from given premises. It draws on decades of research in formal verification (mathematically proving a system meets its specification), satisfiability solving (determining whether a logical formula can be satisfied), and mathematical logic.

These same foundations verify hardware designs, prove cryptographic protocols sound, and pinpoint exactly where safety-critical software violates its specification. Automated Reasoning checks now apply them to generative AI.

The checks combine neural networks with logical reasoning to validate AI outputs against defined rules and constraints, transforming probabilistic responses into formally verified, auditable artifacts. AWS offers Automated Reasoning checks as one of several responsible AI tools to help you safeguard your AI applications.

For a detailed walkthrough of how to configure Automated Reasoning policies and see verification in action, see Minimize generative AI hallucinations with Amazon Bedrock Automated Reasoning checks.

Figure 2: Automated Reasoning checks in Amazon Bedrock, showing the 4-step process: Policy Encoding, Output Translation, Formal Verification Engine, and Result Generation.

Industry applications

Organizations across healthcare, finance, energy, insurance, and education use Automated Reasoning checks to verify AI outputs and explain compliance decisions with audit-ready evidence.

Operational engineering: Amazon Logistics

The Amazon Logistics team reduced engineering review time from approximately 8 hours to minutes while receiving formal compliance verifications on every determination. Amazon Logistics’ Sustainability Engineering team leads the deployment of Electric Vehicle Charging Points (EVCPs) across Amazon’s delivery station network. Each installation proposal needs to meet region-specific regulations and internal technical specifications. Previously, each review required a subject matter expert to spend approximately 8 hours manually cross-referencing engineering parameters.

Working with AWS, the team built a generative AI-assisted design review portal powered by Automated Reasoning checks. The portal translates technical specifications into Automated Reasoning policies, including precise logical rules with explicitly defined variables, types, and conditions. It validates engineering parameters extracted from proposals using formal mathematical reasoning. Claude in Amazon Bedrock powers the document intelligence layer, extracting and structuring data from unstructured proposals.

“Our experts remain the decision-makers, with complete visibility into how the tool operates and the confidence that every recommendation can be traced, verified, and validated.”

– Paula Garcia Carrasco, Sr. Sustainability Engineer, AMZL

Subject matter experts now focus on engineering judgment rather than tedious parameter matching. Learn more in the Amazon Logistics case study

Financial services: Lucid Motors and PwC

Lucid Motors reduced forecast generation from weeks to less than one minute and scaled 14 AI use cases across the enterprise in only 10 weeks. Lucid Motors, the electric vehicle manufacturer, partnered with PwC and AWS to build an AI-native finance forecasting and analytics solution. The finance team faced a familiar challenge: forecasting cycles that took weeks of manual effort, limiting their ability to respond to rapidly changing market conditions.

Together, PwC and AWS built machine learning (ML)-based forecasting agents on Amazon Bedrock. The team applied Automated Reasoning checks as a formal verification layer to mathematically validate that model outputs adhered to predefined financial rules and constraints. This approach catches logical inconsistencies that probabilistic AI alone might miss.

The finance team now actively shapes business decisions in real time rather than waiting weeks for reports.

“Together with PwC and AWS, Lucid is turning its cloud environment into a platform for innovation… PwC’s team rapidly built forecasting tools to reduce manual efforts from weeks to less than a minute.”

– Aditya Baheti, Head of Business Finance, Lucid

Read the Lucid Motors case study.

Education: First Education & Technology Group (FETG) and PwC

FETG achieved up to an 80% reduction in rule-setup effort, a 50% reduction in ongoing compliance overhead, and response latency optimized from 8–13 seconds to 1.5 seconds. First Education & Technology Group (FETG), operator of the MarsLadder AI learning system, partnered with PwC and AWS to build a responsible AI governance layer for student-facing generative AI. Traditional moderation approaches, keyword filters, and probabilistic classifiers failed to reliably enforce the Safer Technologies 4 Schools (ST4S) framework where context and intent matter.

PwC implemented Automated Reasoning checks as a deterministic validation layer, translating ST4S principles into ten formal logic rules covering data protection and student safety. The system verifies every AI-generated response before it reaches a learner, replacing probabilistic judgment with mathematically provable compliance.

The solution provides mathematically provable compliance evidence, a capability that education regulators require for adherence to the ST4S framework. Explore the PwC case study on Responsible AI in Education.

More industries adopting Automated Reasoning checks

Organizations across other regulated industries also adopt Automated Reasoning checks to strengthen compliance:

- Financial services (EU AI Act): Organizations classifying AI risk under the EU AI Act use Automated Reasoning checks to move from inconsistent manual review to formally verifiable, audit-ready compliance workflows. Learn how PwC and AWS build responsible AI with Automated Reasoning.

- Energy and utilities: Utility operators verify AI-generated outage classifications against North American Electric Reliability Corporation (NERC) and Federal Energy Regulatory Commission (FERC) regulatory requirements, with formal verification behind each dispatch decision. Watch the re:Invent talk with PwC on this use case.

- Pharmaceuticals and life sciences: Professional services firms build mathematically verifiable validation layers for AI-driven marketing content, verifying that content claims are grounded in approved source materials.

- Insurance: Insurance carriers build customer-facing chatbots that reason formally over policy language, providing verifiable coverage determinations rather than probabilistic approximations.

Conclusion

In this post, you learned how Automated Reasoning checks in Amazon Bedrock Guardrails deliver mathematically provable verification with audit-ready evidence. For teams building compliance assistants in regulated industries or adding a formal verification layer to existing AI workflows, this technology provides a path from probabilistic confidence to mathematical proof.

Automated Reasoning checks complement other AWS responsible AI capabilities, such as Knowledge Bases for Amazon Bedrock for retrieval-augmented generation, AWS Audit Manager for compliance tracking, and Amazon SageMaker AI for model governance.

Ready to get started?

- Get started: Explore the Automated Reasoning checks documentation for setup guidance.

- For a hands-on walkthrough, see How to minimize generative AI hallucinations with Automated Reasoning checks.

- Your chatbot can iteratively rewrite its answers using Automated Reasoning checks feedback, asking the user clarifying questions until it reaches a provably correct answer.

- To get started, see the Automated Reasoning checks rewriting chatbot reference implementation.

- An agent can walk a subject matter experts through the entire process of improving the Automated Reasoning policy in natural language; See our open-source sample of such an agent.

- See customer results: Amazon Logistics case study | PwC Responsible AI in Education case study | AWS Responsible AI resources

- More about Amazon Bedrock features: Amazon Bedrock Guardrails | Amazon Bedrock AgentCore

- Guidance and responsible AI best practices across AI lifecycle: AWS Well-Architected Responsible AI Lens

To discuss how Automated Reasoning checks can support your compliance use cases, contact your AWS account team. To prepare, identify your top 3 compliance workflows where AI outputs require formal verification.

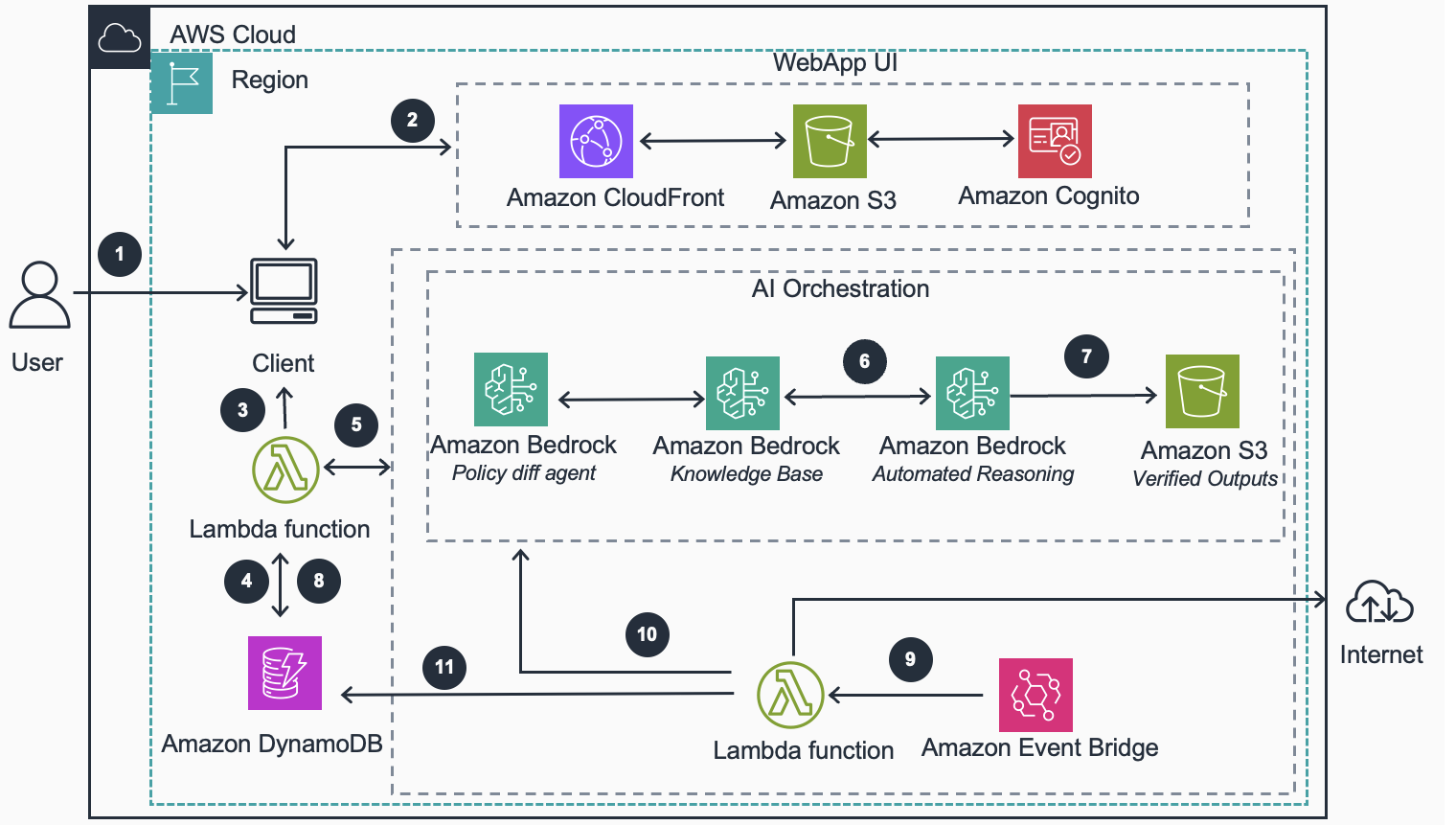

Figure 3: Reference architecture for compliance checks with Amazon Bedrock Automated Reasoning.

- User accesses the application via Amazon CloudFront, which serves the React front end from Amazon Simple Storage Service (Amazon S3) static hosting.

- Amazon Cognito authenticates the user and issues a JWT token. CloudFront enforces authentication on downstream requests.

- User submits a compliance check request specifying their region, facility type, and license category. CloudFront routes the request to AWS Lambda.

- Lambda queries the Amazon DynamoDB Rules Engine using region, facility type, and license category as the key. Returns the exact applicable regulatory rules.

- Lambda injects the rules into a prompt and calls Amazon Bedrock. The Knowledge Base provides retrieval-augmented generation (RAG) context from verified regulatory documents stored in Amazon S3.

- The generated compliance checklist is sent to Amazon Bedrock Automated Reasoning Checks (ARC), which compiles the rules into a formal logic model and mathematically verifies each item. This verification is provable, not probabilistic.

- Verified items are stored in Amazon S3 and returned to the user. Invalid items trigger corrective regeneration with the model (max 3 retries). Out-of-scope items are auto-excluded with reasoning.

- A second DynamoDB table stores customer facility profiles so hospitals can be looked up by ID without re-uploading data on every request.

- Amazon EventBridge Scheduler triggers a Lambda web crawler on a configurable schedule to scrape government regulatory websites for policy changes.

- Scraped content is sent to the Amazon Bedrock Policy Diff Agent, which detects what changed. Updated rules are written to DynamoDB and new documents are re-indexed into the Knowledge Base.

- Compliance reports with ARC verification proofs are stored in DynamoDB and accessible via the Reports Tab for audit trails, filtering, and downloads.

Acknowledgement

Special thanks to Suresh Kanan, Tonny Ouma, Laurie Kasper and Stefano Buliani who contributed to this work.

About the authors

Manuel Rioux est fièrement propulsé par WordPress